It Was Never About AI (We Are Not Our Tools)

Summary

What This Article Is About

Eric Markowitz argues that the current anxiety over artificial intelligence is a misdiagnosis. The real problem, he contends, is the financialized economy that Wall Street and Silicon Valley have jointly built: one that prizes short-term efficiency over long-term resilience, treats workers as cost lines rather than value creators, and mistakes the elimination of people for progress. Drawing on a nature metaphor of redwoods and sequoias, Markowitz warns that economies, like ecosystems, grow fragile when they optimise purely for speed: the tree that shoots up fastest is the first to fall in a storm.

Markowitz insists that every generation has confronted a version of this technological anxiety — from the printing press to the assembly line to the internet — and that the central question has always been the same: are we our tools, or are we something more? He concludes with a personal credo: that choosing not to use a tool, or choosing how to use it with intention and conscience, is not weakness but the most radical act of moral leadership available. Companies that survive the next era, he believes, will be those that chose meaning over margin and kept their people.

Key Points

Main Takeaways

AI Is a Mirror, Not the Problem

AI has not created the crisis of human disposability — it has merely made visible a system that already treated workers as expendable costs long before it arrived.

Speed Breeds Fragility

Nature’s most enduring ecosystems grew slowly and built deep interdependence; economies that chase speed above all else are setting themselves up for collapse when conditions change.

Wall Street and Silicon Valley’s Feedback Loop

The two most powerful forces in the modern economy have formed a self-reinforcing cycle of short-termism, confusing the elimination of people with progress and efficiency with purpose.

This Question Has Always Existed

Every transformative technology — from the printing press to the locomotive to the internet — provoked the same existential question: are we defined by our tools, or do we define them?

Long-Term Winners Refused the Short Game

Jeff Bezos and Warren Buffett built enduring value precisely by refusing to optimise for quarterly earnings — a lesson Wall Street consistently ignores even as it profits from the outcome.

Restraint Is the Radical Act

Choosing not to deploy a tool — or deploying it with conscience rather than convenience — is not weakness but the defining moral leadership decision of this technological moment.

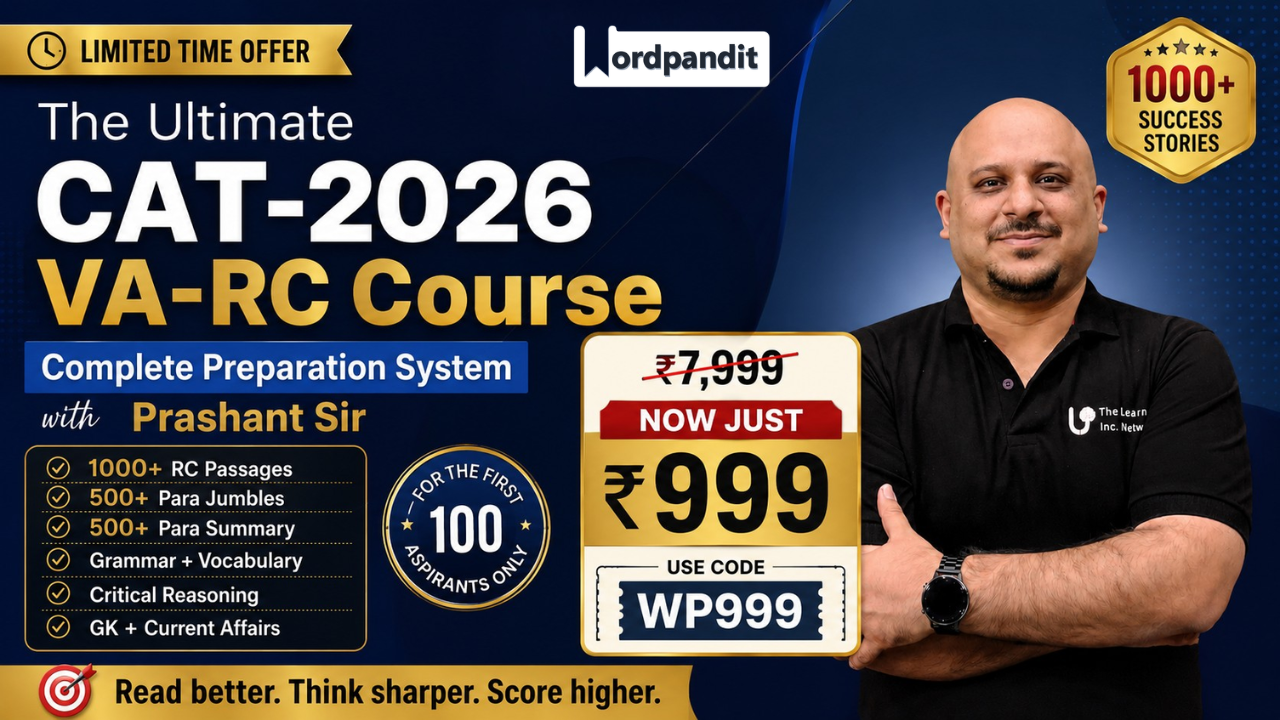

Article Analysis

Breaking Down the Elements

Main Idea

The Crisis Is Moral, Not Technological

Markowitz’s central claim is that framing the AI moment as a technological problem misses the point entirely. The real issue is a long-standing moral failure: an economy that has systematically devalued human beings in favour of quarterly returns, and a culture that has confused capability with obligation — assuming that because a tool can do something, it must be deployed. AI is simply the latest and most powerful instrument in a system that was already broken, which is why any genuine solution must be ethical and cultural rather than technical or political.

Purpose

To Reframe the AI Debate as a Moral Invitation

This is a passionate manifesto disguised as business commentary. Markowitz’s purpose is not simply to critique AI adoption but to redirect his audience’s attention from fear of a technology to reflection on their own values and choices. He wants leaders, founders, and workers to see this moment not as something happening to them, but as a moral decision they are being asked to make. The article is less interested in what AI can do than in what kind of human beings and institutions we want to be in response to it.

Structure

Vivid Provocation → Nature Metaphor → Historical Pattern → Moral Reframing → Personal Credo

The article opens with a devastatingly concrete anecdote (the 26-year-old analyst whose spreadsheet triggers 3,000 redundancies) to ground an abstract critique in immediate reality. It then shifts to an extended nature metaphor (redwoods, ecosystems, roots) to build its philosophical argument for resilience over speed. A historical survey of past technological panics follows to universalise the question, before Markowitz pivots to direct moral exhortation and closes with an intimate personal statement about his own research assistants. The movement from cold systemic critique to warm personal testimony is a deliberate emotional design.

Tone

Impassioned, Prophetic & Intimate

Markowitz writes with the controlled urgency of someone who has reached a moral conclusion and wants to be heard — not debated. Phrases like “I need you to hear it” and “building something worth a damn” signal a preacher’s cadence more than a journalist’s. Yet the tone avoids self-righteousness by grounding itself in personal anecdote and genuine vulnerability: his own walk through Portland’s redwoods, his own two research assistants. The result is a piece that reads as a letter to a thoughtful friend rather than a policy memo or academic argument.

Key Terms

Vocabulary from the Article

Tough Words

Challenging Vocabulary

Zealous

Exhibiting fervent, intense, and often uncritical devotion to a cause, belief, or goal; used here to describe the quasi-religious conviction that capability must always be deployed.

“There is an almost zealous religiosity to this idea, and it’s one that few of us would ever question.”

Cadence

The rhythm, flow, or modulation of speech or writing; here used to describe the persuasive, sermon-like pattern of a Silicon Valley founder’s public address.

“A founder stands on a stage in a fleece vest and speaks with the cadence of a preacher.”

Existential

Relating to or threatening the very existence or fundamental nature of something; used to describe a crisis so profound it calls into question what it means to be human.

“The reason this moment feels so acute, so existential, is not because AI is uniquely powerful.”

Anthropologist

A scholar who studies human societies, cultures, and their development; invoked here ironically to suggest that future scholars will find “unlock shareholder value” a bizarre cultural artefact.

“A phrase that should be studied by future anthropologists as one of the great euphemisms of our time.”

Stewardship

The responsible management and care of something entrusted to one’s keeping; used to question what happens to organisational values and purpose when human employees are replaced by AI systems.

“But who stewards the organization when crisis hits? What values does it hold?”

Gleefully

In a manner expressing exuberant, almost childlike delight; used pointedly to describe how the most successful long-term business leaders deliberately and happily dismissed short-term Wall Street pressure.

“The most successful companies of the last generation are the ones whose leaders very specifically, almost gleefully, denied short-term profits.”

Reading Comprehension

Test Your Understanding

5 questions covering different RC question types

1. According to the article, Markowitz believes that choosing not to use a powerful tool, or using it with intention, is itself a form of leadership rather than a sign of weakness.

2. What does Markowitz mean when he says AI is “holding up a mirror”?

3. Which of the following sentences from the article best expresses the lesson Markowitz draws from the natural world?

4. Evaluate each of the following statements based on the article.

Markowitz argues that Jeff Bezos and Warren Buffett succeeded by enthusiastically embracing Wall Street’s short-term earnings expectations and using them to drive long-term planning.

The article suggests that a company that has replaced most of its employees with AI may lack the human judgment and institutional memory needed to navigate a crisis effectively.

Markowitz personally keeps human research assistants rather than replacing them with AI, and cites their value in ways that cannot be fully captured on a balance sheet.

5. The article lists several historical technologies (the printing press, locomotive, electricity, the assembly line, the internet), each of which was once predicted to be catastrophic. What is the most likely reason Markowitz includes this list?

FAQ

Frequently Asked Questions

Markowitz argues that financial markets and the technology industry reinforce each other’s worst tendencies. Investors pressure companies to maximise short-term shareholder returns, which incentivises executives to cut headcount. Silicon Valley builds the tools that make those cuts possible and frames the resulting human displacement as progress and innovation. The two systems are mutually reinforcing: Wall Street provides capital and approval; Silicon Valley provides the ideological cover and the technology. Neither is held accountable for the human cost.

Redwoods and sequoias are among the oldest and most resilient living organisms on Earth, yet they grow extraordinarily slowly and would be completely unmarketable to a venture capitalist — too slow, not scalable. Markowitz uses them to embody the central paradox of his argument: that the qualities which create enduring strength (slow growth, deep roots, interdependence) are precisely the qualities that modern financial culture treats as weaknesses or inefficiencies. Nature, he suggests, offers a wiser model for building organisations than the quarterly earnings calendar does.

Markowitz argues that the logic of “if a human can be replaced, the human must be replaced” represents a moral surrender — not a neutral business decision. It confuses capability with obligation: just because a technology can do something does not mean it should be deployed. By outsourcing that judgment to market efficiency rather than human conscience, leaders are abandoning their responsibility to the people who build their organisations and the communities those organisations serve. This is what Markowitz calls an abdication — a giving up of something that was ours to decide.

This article is rated Intermediate. The vocabulary is accessible, but the argument works through sustained metaphor, historical analogy, and rhetorical repetition rather than straightforward exposition. Readers need to distinguish between what the author says directly and what he implies through tone, irony, and figurative language — for example, recognising that the redwood metaphor is doing philosophical work, not just decorative colour. This makes it valuable preparation for CAT, GRE, and GMAT passages that use literary or essayistic styles.

“The Long Game” is Eric Markowitz’s business column on Big Think, dedicated to long-term thinking in commerce, leadership, and society. The column’s framing is significant: its entire editorial purpose is to challenge short-termism, which means this article is not a one-off provocation but a consistent expression of Markowitz’s broader project. Readers should note that his arguments — while emotionally compelling — are written from an explicitly anti-short-termism perspective, which shapes which evidence he highlights and which he leaves out.
