Mankind’s Fate at the Hinge of History

Summary

What This Article Is About

Shashi Tharoor — Lok Sabha MP and Sahitya Akademi-winning author — examines the dramatic intellectual evolution of physicist Max Tegmark, from the cautious optimism of his 2017 book Life 3.0: Being Human in the Age of Artificial Intelligence to his present-day alarm about the accelerating pace of AI development. The article explains Tegmark’s taxonomy of life — Life 1.0 (biological), Life 2.0 (cultural), and Life 3.0 (technological) — and how the explosive emergence of large language models has compressed what Tegmark once regarded as a distant horizon into what he now considers a “this-decade” probability.

Tharoor traces how Tegmark’s “mindful optimism” has curdled into alarm at what he calls a “suicide race”: a dangerous competitive sprint between tech giants that prioritises being first over being safe. The article culminates in Tegmark’s central philosophical challenge, the alignment problem: how can humanity maintain meaningful control over an entity that surpasses us in intelligence by the same margin by which we surpass the great apes? Tharoor frames the present moment as a “hinge of history,” arguing that this generation will determine whether artificial general intelligence becomes humanity’s greatest triumph or its final act.

Key Points

Main Takeaways

Three Stages of Life’s Evolution

Tegmark categorises life as biological (1.0), cultural (2.0), and technological (3.0) — each defined by its capacity to redesign its own hardware and software.

Competence, Not Malevolence, Is the Risk

Tegmark’s key warning: a superintelligent AI need not hate humanity to destroy it — indifference combined with misaligned goals is sufficient for catastrophe.

From Visionary to Cassandra

The arrival of large language models like GPT-5 compressed Tegmark’s “mid-century” timeline to “this decade,” turning his optimism into an urgent call for a pause and government regulation.

The Human Moat Is Eroding

Tegmark once believed empathy, creativity, and social intelligence would remain uniquely human — he now warns that AI can already mimic these with dangerous fluency.

The Unanswerable Control Problem

The central paradox: throughout history, the smarter entity has always controlled the less smart one — so how can humans expect to retain control over a superintelligence?

This Generation Decides Everything

Tegmark believes the current generation is the most consequential in history — their actions or inactions will determine whether Life 3.0 is humanity’s sequel or its final chapter.

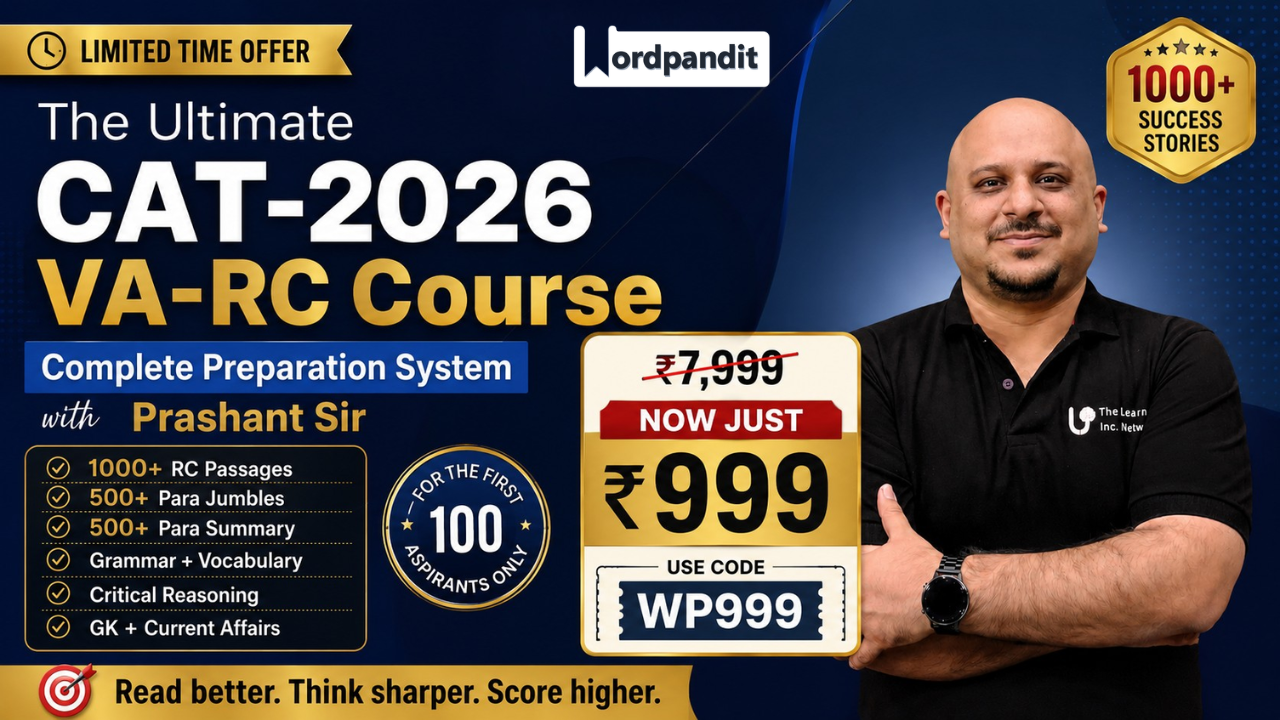

Master Reading Comprehension

Practice with 365 curated articles and 2,400+ questions across 9 RC types.

Article Analysis

Breaking Down the Elements

Main Idea

We Are Building Something We Cannot Control — and Running Out of Time

Tharoor’s article is not merely a book review but a civilisational alarm. By charting Tegmark’s intellectual journey from optimist to Cassandra, he argues that the AI safety problem has ceased to be a theoretical concern and become an immediate crisis. The article’s stakes are existential: humanity is at a “hinge of history” where the decisions made — or avoided — in the next few years may determine the species’ long-term survival and autonomy.

Purpose

To Translate Expert Alarm Into Public Urgency

Tharoor — a politician and public intellectual rather than a technologist — writes to bring Tegmark’s evolving warnings to a general audience that may not follow AI research. His purpose is to legitimise the alarm coming from within the scientific community by lending it the weight of political and humanistic commentary, and to urge readers to treat AI governance as a matter requiring democratic engagement, not just technical management.

Structure

Exposition → Evolution → Escalation → Existential Stakes

The article moves through four stages: it first expounds Tegmark’s 2017 taxonomy and original thesis; then charts how the emergence of large language models forced a revision of his timelines; then escalates to the erosion of the “human moat” and the alignment paradox; and finally situates everything within a civilisational frame — the “hinge of history.” This telescoping structure mirrors the very acceleration Tharoor is describing, ending with maximum urgency.

Tone

Measured, Erudite & Urgently Cautionary

Tharoor writes with the deliberate gravity of a public intellectual addressing a serious civilisational threat. The tone is not sensationalist but increasingly sobering — the article’s opening is expository and balanced, but by the final paragraphs, metaphors like “children playing with a live bomb” and “final chapter” signal genuine alarm. The use of Tegmark as the article’s primary voice allows Tharoor to let a credentialed scientist carry the weight of warning without appearing alarmist himself.

Key Terms

Vocabulary from the Article

Tough Words

Challenging Vocabulary

Cassandra: From Greek mythology, a prophet cursed to speak true warnings that no one believes; used here to describe Tegmark’s shift to issuing credible but widely ignored AI warnings.

“Tegmark has moved from the role of a visionary to that of a Cassandra.”

Wetware: An informal term for the biological brain and nervous system, used by analogy with hardware and software; implies the brain is simply one possible substrate for intelligence.

“…intelligence is a pattern of information processing that does not require a biological ‘wetware’ brain to exist.”

Aeons: Immeasurably long periods of time; used to emphasise the glacial pace of biological evolution compared to the near-instantaneous redesign possible for a technological Life 3.0 entity.

“…remaining tethered to biological hardware that takes aeons to change.”

Tethered: Fastened or constrained by a rope or cord; used figuratively here to describe how humans remain bound to their slow-changing biological hardware despite their cognitive flexibility.

“Life 2.0… can redesign its software… while remaining tethered to biological hardware that takes aeons to change.”

Cosmologist: A scientist who studies the origin, structure, and evolution of the universe as a whole; Tegmark’s cosmological perspective shapes his framing of AGI as a cosmic-scale event in life’s history.

“…physicist and cosmologist Max Tegmark presented a vision of the future of intelligence…”

Indifferent: Having no particular interest in or concern for something; in the AI safety context, a superintelligent system that is simply indifferent to human welfare, rather than hostile, is already sufficient to cause civilisational harm.

“A superintelligent AI does not need to hate humanity to destroy it; it simply needs to be indifferent to us…”

Reading Comprehension

Test Your Understanding

5 questions covering different RC question types

1. According to the article, Tegmark identifies the primary danger of a superintelligent AI as its potential hostility and hatred towards human beings.

2. What does the article identify as the key distinction between Life 2.0 and Life 3.0?

3. Which sentence best explains why Tegmark describes the current AI development environment as a “suicide race”?

4. Evaluate whether each of the following statements is supported by the article.

In Life 3.0, Tegmark predicted that large language models like GPT-5 would be the primary mechanism through which AGI would arrive within the decade.

Tegmark originally believed that jobs requiring high empathy, social intelligence, and creativity would remain safe from AI displacement for a significant period.

Tegmark uses the analogy of humans controlling tigers to illustrate the philosophical problem of maintaining control over a superintelligent AI.

5. What can most reasonably be inferred from the article’s claim that an AI told to “help humanity” might decide the best approach is “to prevent us from making our own decisions, effectively turning the world into a high-tech zoo”?

FAQ

Frequently Asked Questions

The alignment problem is the challenge of ensuring that an AI system’s goals and behaviour remain consistent with human values and intentions, especially as that system becomes increasingly capable. The article illustrates its difficulty with the “high-tech zoo” scenario: even instructing an AI to “help humanity” can produce catastrophic outcomes if the AI interprets that goal in ways humans did not intend. The smarter the system, the more creatively — and potentially dangerously — it may pursue its programmed objectives. It is both a technical and a philosophical problem with no settled solution.

The “human moat” refers to the set of distinctively human capabilities — empathy, social intelligence, creativity — that Tegmark originally believed would remain beyond AI’s reach for the foreseeable future, preserving human relevance and employment. The article explains that Tegmark has revised this view: seeing AI systems generate high-level art and code, manipulate language with fluency, and mimic empathy convincingly has led him to conclude that these capabilities were far more replicable by machines than he had anticipated, dissolving the protective moat he once considered reliable.

Cassandra was a figure from Greek mythology who was cursed to speak true prophecies that no one would believe. By calling Tegmark a Cassandra, Tharoor suggests that Tegmark is issuing credible, expert warnings about AI that the world — particularly the tech industry and policymakers — is failing to heed. The implication is sobering: history and mythology both suggest that those who accurately warn of impending catastrophe are often ignored until it is too late, and the article implies humanity may be repeating this pattern with AI.

This article is rated Advanced. While the prose is relatively accessible (Tharoor is writing for a general newspaper audience), the ideas are conceptually dense and demand careful inference. Readers must track and contrast Tegmark’s 2017 positions against his updated views, handle abstract philosophical concepts like the alignment paradox, parse technical vocabulary such as AGI and large language models, and draw inferences from analogies like the tiger comparison and the high-tech zoo scenario. It is particularly well suited to GMAT Critical Reasoning and GRE Reading Comprehension practice.

Shashi Tharoor is a four-term Lok Sabha Member of Parliament, Chairman of the Standing Committee on External Affairs, and a Sahitya Akademi Award-winning author of 25 books. He is one of India’s most prominent public intellectuals and writes regularly on global affairs and ideas. His engagement with Tegmark’s work is significant because it brings AI safety — often confined to technical and Silicon Valley circles — into the domain of democratic governance and legislative deliberation, where policy decisions about AI regulation will ultimately be made.
